IETF RFC 5481 requires the use of packet delay variation (PDV) in measurements.

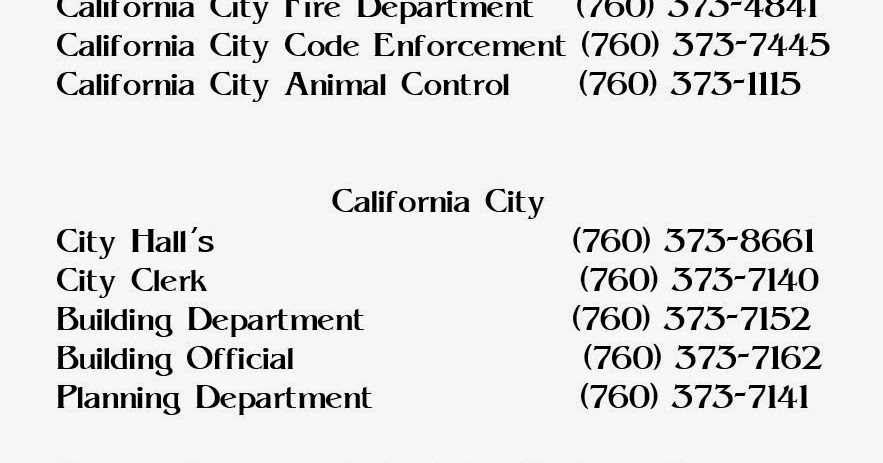

However, the transport time variation is as important as the median rate and a jitter indicator is to determine this variation. The majority of packets clearly had latency in a narrow band above the lower limit while being much faster than the most delayed packets. Two-way packet latency in a 30 s packet stream had a slight log-normal distribution. Such intermediate buffering not only happens at the UDP and IP level, but also in lower layers as transport blocks. This scenario quickly reveals that latency is not a constant for a given connection or mobile channel, since transport packets are buffered and queued at several stages before traveling onward. This result is very similar to the previous blog entry but this time uplinks and downlinks used 5G carriers with a 5G non-standalone connection, resulting in shorter two-way latency of 10 ms. We transferred packets for 30 s with a bitrate of 300 kbit/s and measured the two-way latency from the transmitting mobile client to the reflecting server and back. This architecture prevented additional delays from upper layers in the Android operating system and acted as a technical measurement or application most resembling a real-time interface for a mobile client. The packets were directly sent and captured using an IP-socket interface in a state-of-the-art smartphone. We selected packet frequencies and sizes typical for real-time applications. We transported thousands of UDP packets to a network server and back. It is based on the user datagram protocol (UDP) applied for most real-time network communications. To obtain realistic latency measurements, a typical data stream for real-time applications was emulated as using the two-way active measurement protocol (TWAMP) in line with IETF RFC 5357. Real-time capability is not determined by the fastest packets delivered but by those delivered last. 
Even though radio link technology supports very short latency, this needs to be true for each packet and we must determine performance when moving as well under imperfect radio channel conditions. URLLC requires a server at the network edge that is perfectly connected to the core network. Ultra-reliable, low latency communications (URLLC) that enable very short latency periods over radio links are a key promise of 5G technology. The changes mainly stem from the user moving around but can also come from suboptimal coverage and congested networks.Īgain, data transport latency is one of the most important factors in interactive and real-time use cases in mobile networks. Now, we will make a more granular analysis of how long is needed to transport data packets in mobile channels when the network conditions are constantly changing. Recently, we discussed how distance to the server can impact latency and jitter. This study looks at data latency in real-field mobile networks.
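
As a rough illustration of the packet delay variation (PDV) metric from IETF RFC 5481, the sketch below takes a list of two-way latency samples and computes each packet's delay relative to the minimum delay in the stream, which is how that RFC defines PDV. The function names, the nearest-rank percentile method, and the sample values are assumptions made for this example and are not taken from the measurement setup described in this post.

```python
import math
import statistics


def packet_delay_variation(delays_ms):
    """PDV per packet: each delay minus the minimum delay in the stream."""
    if not delays_ms:
        raise ValueError("need at least one delay sample")
    d_min = min(delays_ms)
    return [d - d_min for d in delays_ms]


def percentile(sorted_values, p):
    """Nearest-rank percentile (0 < p <= 100) of an already sorted list."""
    rank = max(1, math.ceil(p / 100 * len(sorted_values)))
    return sorted_values[rank - 1]


def summarize(delays_ms):
    """Summarize a stream by its floor, its median, and the tail of its PDV."""
    pdv = sorted(packet_delay_variation(delays_ms))
    return {
        "min_delay_ms": min(delays_ms),
        "median_delay_ms": statistics.median(delays_ms),
        "pdv_p99_ms": percentile(pdv, 99),
        "pdv_max_ms": pdv[-1],
    }


if __name__ == "__main__":
    # Hypothetical two-way latency samples in milliseconds, not measured data.
    samples = [10, 12, 11, 30, 10, 13, 11, 12]
    print(summarize(samples))
```

Reporting a high percentile of the PDV alongside the median reflects the point that real-time capability depends on the slowest packets rather than the fastest ones.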
